Introduction

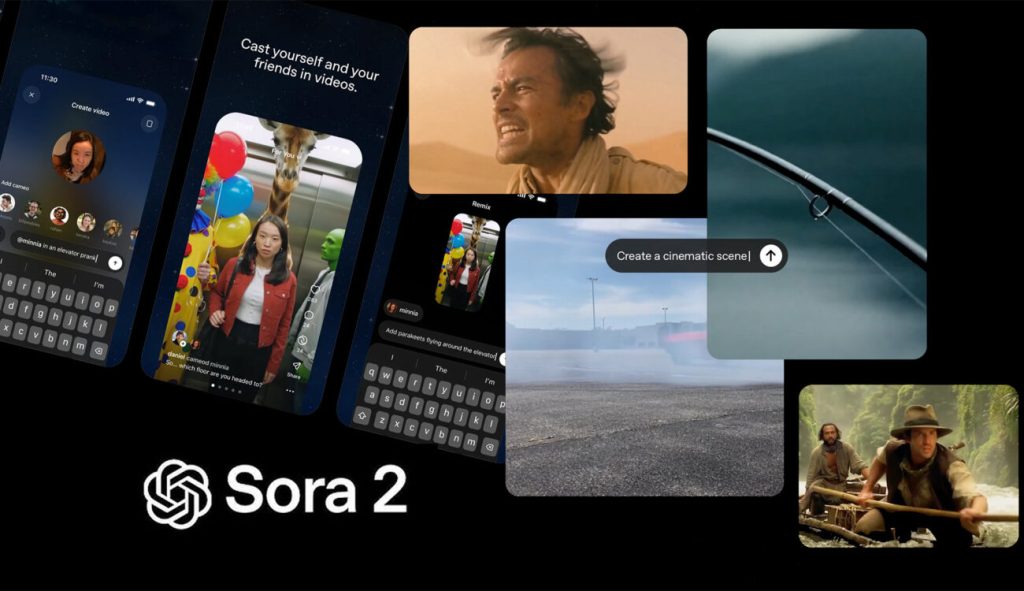

The rapid growth of artificial intelligence has transformed how digital content is created, especially in video production. Among the most notable innovations in this space is Sora 2, OpenAI's AI video generation model, designed to convert text prompts into visually rich, realistic motion sequences. As demand for content keeps rising in industries such as entertainment, marketing, and education, tools like Sora 2 represent a major shift toward faster, more scalable, and more automated video creation workflows.

What makes Sora 2 particularly interesting is not just its ability to generate moving images but its capacity to understand context, simulate physical behavior, and construct cohesive narratives. Earlier text-to-video systems focused mainly on short clips or simple motion; Sora 2 adds stronger scene awareness, consistency over longer timelines, and cinematic storytelling capabilities. This progression places Sora 2 at the center of discussions about the future of generative video, especially as creators look for tools that combine automation with professional-level results.

The Evolution from Early Video AI to Sora 2

The development of AI video generation technology has been gradual, starting with simple frame interpolation tools and evolving into modern generative video AI systems capable of synthesizing entirely new visual content. Early tools focused on enhancing existing footage rather than creating new scenes. However, the arrival of Sora 2 signals a significant leap forward, demonstrating how neural networks can generate full-motion sequences from descriptive language.

What distinguishes Sora 2 from its predecessors is its ability to maintain temporal coherence across longer sequences. In earlier models, objects might change shape, shift unexpectedly, or lose continuity across frames. With Sora 2, improvements in deep learning architectures allow for smoother motion and more consistent visual storytelling. These advancements help creators move beyond experimental clips toward fully structured narratives powered by AI video synthesis.

Another notable change in the transition to Sora 2 is the scale of training data used. By learning from diverse visual patterns and motion scenarios, Sora 2 can generate more complex environments, ranging from natural landscapes to urban scenes. This level of adaptability reflects how machine learning models continue to evolve as computing power increases and datasets expand.

Core Technology Behind Sora 2

At the heart of Sora 2 lies a sophisticated neural architecture designed to process language and visual information together. This lets Sora 2 treat a prompt not merely as text but as a set of conceptual instructions that guide visual composition. Transformer-based deep learning models allow the system to translate written descriptions into dynamic sequences of frames.
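Transformer-based video models commonly represent a clip as a sequence of "spacetime patches", small blocks spanning a few frames and a few pixels, so that one attention mechanism can relate visual tokens to text tokens. The source does not say how Sora 2 tokenizes video, so the following is only a minimal sketch of the general idea, assuming a single-channel clip stored as nested lists; the function name `spacetime_patches` and the patch sizes are illustrative.

```python
from typing import List

Video = List[List[List[float]]]  # frames x height x width, single channel


def spacetime_patches(video: Video, pt: int, ph: int, pw: int) -> List[List[float]]:
    """Cut a clip into non-overlapping (pt x ph x pw) blocks, one flat token each.

    A transformer would embed these tokens and attend over them together with
    the text tokens. Every clip dimension must be divisible by its patch size.
    """
    t_len, h_len, w_len = len(video), len(video[0]), len(video[0][0])
    if t_len % pt or h_len % ph or w_len % pw:
        raise ValueError("clip dimensions must be divisible by the patch size")
    tokens: List[List[float]] = []
    for t0 in range(0, t_len, pt):          # step through time
        for y0 in range(0, h_len, ph):      # then rows
            for x0 in range(0, w_len, pw):  # then columns
                tokens.append([
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ])
    return tokens


# A 4-frame, 8x8 toy clip cut into 2x4x4 patches: 2 * 2 * 2 = 8 tokens of 32 values.
clip = [[[float(t * 100 + y * 10 + x) for x in range(8)] for y in range(8)] for t in range(4)]
tokens = spacetime_patches(clip, 2, 4, 4)
print(len(tokens), len(tokens[0]))
```

Because each token covers several frames, a single token already carries some motion information, which is one reason this layout suits video.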

One of the core innovations behind Sora 2 involves temporal diffusion models. In a diffusion model, generation starts from random noise that is refined over many denoising steps; for video, this refinement must also keep the frames logically continuous with one another. Unlike static image generators, Sora 2 must predict not only what objects look like but also how they move and interact over time. This makes video diffusion far more computationally demanding than image generation.
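The "gradual" part of diffusion is easiest to see in the standard DDPM-style forward (noising) process that a network is trained to reverse: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise. The sketch below applies that formula to every pixel of every frame at the same timestep, so the whole clip is noised, and later denoised, as one unit rather than frame by frame. It is a textbook illustration with an assumed linear noise schedule; the source does not describe Sora 2's actual schedule or architecture.

```python
import math
import random
from typing import List

Video = List[List[List[float]]]  # frames x height x width


def linear_beta_schedule(num_steps: int, beta_start: float = 1e-4,
                         beta_end: float = 0.02) -> List[float]:
    """Per-step noise variances growing linearly (an assumed, common choice)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas: List[float]) -> List[float]:
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def noise_clip(clip: Video, t: int, alpha_bar: List[float], rng: random.Random) -> Video:
    """Sample x_t from x_0 for all frames at once, using one shared timestep t."""
    signal = math.sqrt(alpha_bar[t])
    noise = math.sqrt(1.0 - alpha_bar[t])
    return [
        [[signal * px + noise * rng.gauss(0.0, 1.0) for px in row] for row in frame]
        for frame in clip
    ]


rng = random.Random(0)
abar = cumulative_alphas(linear_beta_schedule(1000))
grey_clip = [[[0.5] * 4 for _ in range(4)] for _ in range(8)]  # 8 tiny grey frames
slightly_noisy = noise_clip(grey_clip, 10, abar, rng)
almost_pure_noise = noise_clip(grey_clip, 999, abar, rng)
```

At t = 10 the clip is still mostly signal; by t = 999 alpha_bar is tiny and the frames are nearly pure noise. A trained video model learns to run this process in reverse.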

Another key component of Sora 2 is its spatial reasoning, which determines how objects relate to each other within a scene. For example, when a scene involves vehicles, people, or environmental effects, Sora 2 generates movement patterns that appear natural. This ability highlights the growing sophistication of AI video engines, which increasingly mimic real-world physics and camera behavior.

Understanding Prompt-to-Video Intelligence

Prompt interpretation is one of the defining strengths of Sora 2, enabling users to describe scenes using natural language. The prompt-to-video workflow begins when a user writes a description, specifying elements such as location, lighting, camera angles, and actions. Sora 2 then processes this information and translates it into visual sequences that align with the intended narrative.

The effectiveness of Sora 2 depends heavily on prompt clarity and structure. Detailed prompts often produce more accurate results because they provide contextual signals that guide scene construction. For instance, specifying environmental conditions or character behavior helps Sora 2 generate sequences that feel coherent and purposeful. This highlights the importance of prompt engineering in maximizing the performance of AI video tools.
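Since clarity and structure matter so much, some creators keep prompt elements in named fields and assemble the final text from them, so that nothing (location, action, lighting, camera) is forgotten. The following is a minimal sketch of that habit; `ShotPrompt` and its "Setting:/Action:" labels are hypothetical and are not a format Sora 2 is known to require, since it accepts free-form text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ShotPrompt:
    """Hypothetical container for the prompt elements discussed above."""
    location: str
    action: str
    lighting: str = ""
    camera: str = ""
    details: List[str] = field(default_factory=list)

    def render(self) -> str:
        # Setting and action first, then the look and camera work, each as its
        # own short sentence so the elements stay unambiguous.
        parts = [f"Setting: {self.location}.", f"Action: {self.action}."]
        if self.lighting:
            parts.append(f"Lighting: {self.lighting}.")
        if self.camera:
            parts.append(f"Camera: {self.camera}.")
        parts.extend(f"{detail}." for detail in self.details)
        return " ".join(parts)


prompt = ShotPrompt(
    location="a rain-soaked street market at dusk",
    action="a cyclist weaves between the stalls",
    lighting="neon signs reflecting off wet pavement",
    camera="slow tracking shot at shoulder height",
)
print(prompt.render())
```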

Another advantage of Sora 2 is its ability to interpret abstract or creative prompts. Users can describe imaginative scenarios that may not exist in reality, and Sora 2 will attempt to visualize them convincingly. This flexibility makes generative video AI an appealing tool for storytelling, visual experimentation, and creative exploration across various disciplines.

Realistic Physics and Scene Awareness

One of the most impressive features of Sora 2 is how it simulates physical movement within generated scenes. Realistic motion is essential for believable video, and Sora 2 models physical behavior so that objects move plausibly, including the effects of gravity, collision responses, and interactions with the environment.
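The source does not describe how Sora 2 represents physics internally, so the sketch below is not its mechanism. It illustrates one way to check a single aspect, gravity, in a generated clip: a dropped object should accelerate downward at about 9.81 m/s², so the second differences of its per-frame height, multiplied by fps², should average near -9.81. It assumes you have already tracked the object's height in meters for each frame; that tracking step, and the function names, are hypothetical.

```python
from typing import List

G = 9.81  # standard gravity in m/s^2


def estimated_accelerations(heights_m: List[float], fps: float) -> List[float]:
    """Central second differences of per-frame height, converted to m/s^2."""
    return [
        (heights_m[i + 1] - 2 * heights_m[i] + heights_m[i - 1]) * fps ** 2
        for i in range(1, len(heights_m) - 1)
    ]


def looks_like_free_fall(heights_m: List[float], fps: float, tolerance: float = 1.0) -> bool:
    """True if the mean estimated acceleration is within `tolerance` m/s^2 of -G."""
    acc = estimated_accelerations(heights_m, fps)
    if not acc:
        raise ValueError("need at least three frames")
    return abs(sum(acc) / len(acc) + G) <= tolerance


# Synthetic height tracks standing in for measurements taken from a clip.
fps = 24
dropped = [10 - 0.5 * G * (i / fps) ** 2 for i in range(12)]  # ideal free fall
drifting = [10 - 0.2 * i / fps for i in range(12)]            # constant speed, no gravity
print(looks_like_free_fall(dropped, fps))   # True
print(looks_like_free_fall(drifting, fps))  # False
```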

Scene awareness also plays a major role in the performance of Sora 2. The system evaluates relationships between objects, keeping motion paths consistent with visual expectations. For example, if a character walks across a surface, Sora 2 aims to render plausible foot placement and body balance so the movement looks believable. Such capabilities demonstrate how AI motion modeling continues to evolve toward higher realism.

Additionally, Sora 2 improves consistency across multi-frame sequences by maintaining object identity throughout the video. Earlier video generation AI models struggled with continuity, often producing flickering artifacts or inconsistent shapes. By addressing these limitations, Sora 2 enhances visual reliability and supports more complex scene compositions.
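Flickering is easy to quantify crudely: average how much each pixel changes from one frame to the next. The sketch below does exactly that on grayscale frames; `flicker_score` is a hypothetical name, and the metric cannot tell flicker apart from legitimate motion (a panning camera also scores high), so it is only useful for comparing generations of the same prompt. Serious evaluations typically rely on optical flow or object tracking instead.

```python
from typing import List

Frame = List[List[float]]  # grayscale intensities in [0, 1]


def flicker_score(frames: List[Frame]) -> float:
    """Mean absolute intensity change between consecutive frames."""
    if len(frames) < 2:
        return 0.0
    total, count = 0.0, 0
    for prev, cur in zip(frames, frames[1:]):
        for row_prev, row_cur in zip(prev, cur):
            for a, b in zip(row_prev, row_cur):
                total += abs(b - a)
                count += 1
    return total / count


static = [[[0.5, 0.5], [0.5, 0.5]] for _ in range(5)]  # nothing changes
strobing = [[[0.0]], [[1.0]], [[0.0]]]                  # full swing every frame
print(flicker_score(static), flicker_score(strobing))
```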

Cinematic Quality and Visual Storytelling

Cinematic storytelling requires careful control over lighting, camera movement, and scene transitions. Sora 2 incorporates visual design principles that allow it to produce sequences resembling professionally filmed footage. This includes dynamic camera angles, depth-of-field effects, and controlled motion pacing.

One of the notable strengths of Sora 2 is its ability to simulate cinematic composition. By analyzing prompt details, the system determines how to frame subjects and position virtual cameras. This capability brings AI video generation closer to traditional filmmaking processes, enabling creators to design scenes without physical equipment.

Another important element of Sora 2 is its support for visual narrative flow. Instead of generating isolated clips, Sora 2 can produce sequences that connect logically from one scene to the next. This makes it particularly valuable for storytelling applications where continuity and emotional pacing are essential components of the final output.

Multi-Scene Narrative Generation

Modern video production often requires multiple scenes that unfold in sequence. Sora 2 addresses this challenge by supporting extended timelines and scene transitions within a single generation process. This allows users to create short films, promotional videos, or visual explanations using only text instructions.
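The source does not specify how multi-scene instructions are passed to Sora 2, so the following is a hypothetical planning aid a creator might keep on their own side: a small storyboard that lays scenes end to end and shows where each one starts, how long it runs, and which transition leads into it. The `Scene` fields and transition labels are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Scene:
    """One entry in a hypothetical storyboard."""
    description: str
    seconds: float
    transition_in: str = "cut"  # illustrative labels such as "cut" or "crossfade"


def timeline(scenes: List[Scene]) -> List[Tuple[float, float, str, str]]:
    """Lay scenes end to end and return (start, end, transition, description)."""
    rows: List[Tuple[float, float, str, str]] = []
    start = 0.0
    for scene in scenes:
        end = start + scene.seconds
        rows.append((start, end, scene.transition_in, scene.description))
        start = end
    return rows


board = [
    Scene("a lighthouse keeper climbs the stairs at night", 4.0),
    Scene("the lamp flickers on above the stormy sea", 3.0, "crossfade"),
    Scene("a ship turns away from the rocks", 5.0),
]
for start, end, transition, text in timeline(board):
    print(f"{start:4.1f}-{end:4.1f}s [{transition}] {text}")
```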

The multi-scene capability of Sora 2 relies on memory retention within its neural architecture. By tracking previously generated frames, the system maintains visual consistency between scenes. This ensures that characters, objects, and environments remain recognizable throughout the sequence, improving the realism of AI-generated video content.

Another benefit of multi-scene generation in Sora 2 is workflow efficiency. Traditional video editing requires manual transitions, while Sora 2 automates these processes. This significantly reduces production time and enables creators to focus on storytelling rather than technical assembly tasks.
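To make the "manual transition" concrete: the simplest transition an editor applies by hand is a linear crossfade, where intermediate frames are weighted blends of the last frame of one shot and the first frame of the next. The sketch below computes those frames; `crossfade` is a hypothetical helper, and the source does not say how Sora 2 produces transitions internally.

```python
from typing import List

Frame = List[List[float]]  # grayscale intensities


def crossfade(last_a: Frame, first_b: Frame, steps: int) -> List[Frame]:
    """Blend from clip A's last frame to clip B's first frame.

    Weights run 1/(steps+1), ..., steps/(steps+1), so neither endpoint
    frame is duplicated inside the transition.
    """
    frames: List[Frame] = []
    for k in range(1, steps + 1):
        w = k / (steps + 1)  # share of clip B in this frame
        frames.append([
            [(1 - w) * a + w * b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(last_a, first_b)
        ])
    return frames


print(crossfade([[0.0]], [[1.0]], steps=3))  # [[[0.25]], [[0.5]], [[0.75]]]
```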

Practical Use Cases Across Industries

The adoption of Sora 2 extends beyond entertainment into fields such as marketing, education, and product design. Businesses can use AI video tools to create promotional materials quickly, reducing reliance on expensive filming equipment. This accessibility allows smaller organizations to compete with larger companies in visual marketing campaigns.

In education, Sora 2 offers new possibilities for visual learning. Teachers and content creators can generate educational videos that illustrate complex concepts through animation and motion. This approach enhances comprehension by combining visual storytelling with informative narration, demonstrating the potential of AI-powered learning tools.

Another promising application of Sora 2 involves prototyping and concept visualization. Designers can generate simulated environments to test ideas before building physical models. By accelerating the design process, Sora 2 contributes to more efficient product development cycles across multiple industries.

Workflow Integration for Creators

Integrating Sora 2 into existing production workflows requires careful planning, especially for teams accustomed to traditional editing software. Many creators use AI video platforms alongside tools for audio editing, color grading, and visual effects. This hybrid workflow combines automation with manual refinement to achieve professional-quality results.

One advantage of Sora 2 integration is the reduction of repetitive tasks. For example, generating background footage or environmental transitions can be automated, freeing time for creative decision-making. This aligns with the broader trend of AI-assisted production, where machines handle technical tasks while humans focus on storytelling.

Another important aspect of workflow integration is collaboration. Teams using Sora 2 can experiment with multiple versions of scenes quickly, enabling faster feedback cycles. This flexibility enhances productivity and encourages innovation in video design projects.
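Trying several versions of a scene is mostly a matter of sending a few prompt variants at once and comparing the results. The sketch below assumes a hypothetical `generate` callable that you would supply, wrapping whatever generation service your team actually uses; no real API names are implied. Threads are a reasonable fit because such requests spend most of their time waiting on the network.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def generate_variants(base_prompt: str, tweaks: List[str],
                      generate: Callable[[str], str], max_workers: int = 4) -> List[str]:
    """Run `generate` on each prompt variant concurrently; results keep input order."""
    prompts = [f"{base_prompt}, {tweak}" for tweak in tweaks]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(generate, prompts))


def fake_generate(prompt: str) -> str:
    # Placeholder for a real request to a video-generation service.
    return f"clip<{prompt}>"


results = generate_variants("a fox crossing a snowy field",
                            ["at dawn", "at night", "in a blizzard"],
                            fake_generate)
print(results)
```

`ThreadPoolExecutor.map` returns results in the order of its inputs, so each result lines up with the tweak that produced it even if requests finish out of order.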

Current Limitations and Ethical Considerations

Despite its advanced capabilities, Sora 2 still faces technical limitations that developers continue to address. For instance, extremely complex scenes may require additional computational resources, leading to longer generation times. These performance challenges highlight the ongoing need for hardware optimization in AI video systems.
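Some rough arithmetic shows why video is so much heavier than still images. The resolution and frame rate below are assumed, illustrative values, not Sora 2's specifications.

```python
def raw_values(width: int, height: int, frames: int = 1, channels: int = 3) -> int:
    """Count of raw pixel values: width x height x channels x frames."""
    return width * height * channels * frames


image = raw_values(1280, 720)                 # a single 720p RGB frame
clip = raw_values(1280, 720, frames=10 * 24)  # 10 seconds at an assumed 24 fps
print(image, clip, clip // image)             # 2764800 663552000 240
```

Many modern generators work in a compressed latent space, so the absolute numbers they handle are smaller, but the workload still grows with clip length. If a model attended over all tokens with full self-attention, compute would grow roughly with the square of the token count, so a clip with 240 times as many tokens could cost far more than 240 times as much.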

Ethical considerations also play a significant role in the development of Sora 2. As generative AI becomes more realistic, concerns arise regarding authenticity and content misuse. Developers must implement safeguards that prevent harmful applications while preserving creative freedom.

Another limitation involves how accurately prompts are interpreted. While Sora 2 can process detailed prompts, ambiguous descriptions may produce unexpected outputs. This reinforces the importance of clear instructions and responsible use of AI video generation technology.
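One low-cost habit is to check a prompt for obviously missing elements before spending a generation on it. The sketch below is a crude keyword heuristic: the hint word lists and `missing_elements` are hypothetical and say nothing about how Sora 2 actually parses prompts. It simply flags prompts that mention no location, lighting, or camera cues.

```python
import re
from typing import Dict, List, Set

# Hypothetical hint words for each element a clear prompt usually specifies.
HINTS: Dict[str, Set[str]] = {
    "location": {"in", "at", "on", "inside", "outside", "near", "beside"},
    "lighting": {"light", "lighting", "lit", "sunlight", "sunset", "sunrise",
                 "dawn", "dusk", "night", "shadow", "shadows", "neon", "golden"},
    "camera": {"shot", "close-up", "wide", "pan", "tracking", "angle",
               "aerial", "dolly", "handheld"},
}


def missing_elements(prompt: str) -> List[str]:
    """Names of elements with no hint word in the prompt (a crude heuristic)."""
    words = set(re.findall(r"[a-z-]+", prompt.lower()))
    return [name for name, hints in HINTS.items() if not (words & hints)]


print(missing_elements("a dog"))                                 # ['location', 'lighting', 'camera']
print(missing_elements("Wide aerial shot of a harbor at dawn"))  # []
```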

The Future of AI Video Models

Looking ahead, the future of Sora 2 and similar technologies appears promising. Advances in computing power and neural network design will likely improve rendering speed and visual quality. As AI video generation evolves, longer and more complex narratives will become easier to produce.

Another anticipated development involves interactive video creation. Future versions of Sora 2 may support real-time adjustments, allowing users to modify scenes dynamically. This capability would transform digital storytelling, enabling creators to experiment with multiple visual outcomes instantly.

Ultimately, the continued refinement of Sora 2 signals a broader shift toward automated creative workflows. As artificial intelligence becomes more integrated into production pipelines, the boundary between imagination and execution will continue to shrink, redefining how video content is conceptualized and delivered.

Conclusion

The emergence of Sora 2 marks a pivotal moment in the evolution of AI video generation technology. By combining advanced neural architectures with cinematic storytelling principles, Sora 2 demonstrates how artificial intelligence can transform creative workflows. From realistic motion simulation to multi-scene narrative generation, the system provides tools that simplify complex production processes while maintaining visual quality.

While challenges remain in areas such as performance optimization and ethical governance, the overall trajectory of Sora 2 suggests a future where video creation becomes more accessible and efficient. As industries continue to explore the capabilities of generative video AI, Sora 2 stands as a strong example of how technology can empower creators to produce compelling visual content with minimal resources.
